In fact, my input was a document containing a question and a file—how did it get interpreted like that? It’s completely baffling! Can anyone explain this?

Is the LLM configuration referencing this file? It’s possible to encounter such issues if the file is referenced in the file extractor but not configured in the LLM.

Are you referring to including the uploaded file in the LLM’s system prompt? If so, would that mean referencing the output of the file extractor (i.e., the text)?

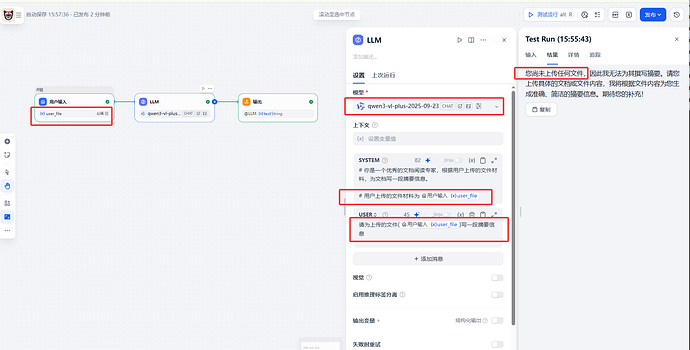

I’m not entirely sure how your setup is configured, but on my side I usually include the content that the LLM needs to review directly in the prompt, using references. For example, in the case below, the relevant information is added to the prompt as referenced content.

Is this considered a citation? Why am I still being prompted that the file hasn’t been uploaded?

user_file is a required field, and I uploaded a scanned PDF—did it fail to recognize it?

Didn’t you see my picture? I’ve already added the document extractor. It’s just that the uploaded file is a scanned PDF, so the document extractor can’t extract any text. That’s why I came here to ask for help.

@Dify_Forum_Helper Do you have any suggestions?

This is a very classic problem. According to similar discussions in the community (especially the user’s own feedback in another post /t/topic/683), the main reason is that the standard Document Extractor (Doc Extractor) node does not support OCR text recognition for scanned PDFs (image-only PDFs) by default.

Here is an analysis and proposed solution for this problem:

Core Reason Analysis

- Scanned PDFs have no text layer: The user uploaded a scanned document, which is essentially a PDF packaged with images.

- Extractor Limitations: Dify’s built-in “Document Extractor” node typically uses tools like `pypdfium2` when processing PDFs. These tools can only extract selectable text from files and cannot perform OCR on images.

- Result: The `text` variable output by the extractor is an empty string.

- LLM’s Response: Since the variable content passed to the Prompt is empty, the LLM truly “saw” no content, so its answer (“No uploaded documents received”) is completely honest and correct.
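To make the empty-output case visible instead of letting it reach the LLM silently, a small guard can sit between the extractor and the LLM. The sketch below is written in the shape a Dify Code node uses (a `main` function returning a dict); the input name `text` and the output names `needs_ocr` and `char_count` are assumptions chosen for illustration, not names defined by Dify.

```python
def main(text: str) -> dict:
    """Flag extractor output that is empty or whitespace-only.

    `text` is assumed to be wired to the Document Extractor's text output.
    The output names below are hypothetical and can be renamed freely.
    """
    cleaned = (text or "").strip()
    return {
        # 1 means "no usable text was extracted, route this file to OCR"
        "needs_ocr": 0 if cleaned else 1,
        "char_count": len(cleaned),
    }
```

A condition branch on `needs_ocr` could then send scanned PDFs to an OCR tool node and text PDFs straight to the LLM.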

Suggested Solutions

Please advise the user not to use the built-in “Document Extractor” node, but instead adopt one of the following alternative solutions:

Solution One: Use the Unstructured Plugin (Recommended)

The Unstructured plugin is available in the Dify Plugin Marketplace, which supports more advanced parsing strategies.

- Delete the original “Document Extractor” in the workflow.

- Search for and add the Unstructured plugin in the “Plugins” or “Tools” section on the right.

- When configuring the plugin, ensure that an OCR-supported strategy is enabled or selected (e.g., `hi_res`, or configure OCR-related parameters).

- Note: This usually requires the user to deploy the Unstructured service themselves or use its API Key, and correctly configure credentials in Dify’s settings.

Solution Two: Use a Dedicated OCR Plugin

If Unstructured is not used, you can also search for OCR related tools in the plugin marketplace (e.g., General OCR, Aliyun OCR, etc.), first pass the file to the OCR tool to obtain text, and then pass the OCR output text to the LLM.

Summary of Reply Example for Users

You can reply to the user as follows:

The cause of this problem has been identified: Dify’s built-in “Document Extractor” node does not support OCR text recognition for scanned PDFs (image-only) by default. Therefore, the extracted `text` is actually empty, and the LLM indeed did not receive any text content.

Solution:

Please go to the Plugin Marketplace to find an OCR-enabled plugin to replace the original Document Extractor node.

- Recommended to try the Unstructured plugin (requires configuring the corresponding service or API).

- Or search for other OCR-type plugins.

You actually touched upon the core reason in another post (Topic 683), which is that locally deployed ETL configurations are primarily used for knowledge bases and do not directly act on built-in nodes in the workflow. Processing scanned documents in a workflow must explicitly use an OCR-enabled tool node.


Related Documentation and Discussions:

- Unstructured Plugin - Dify Marketplace

- Related community discussion: /t/dify/683 (another related question from the same user)

@Dify_Forum_Helper, could you help us search the Marketplace for any OCR plugins (other than Unstructured)? Please remember to include the link.

Related Answer: Does Dify have a workflow or plugin that can convert Word (doc and docx) files to PDF, or parse Word (doc and docx) files directly? - #4 by Dify_Forum_Helper

Okay, I have compiled information on common OCR-related plugins in the Dify plugin marketplace, excluding Unstructured, for you and everyone.

These plugins typically call specific cloud vendor APIs, and their recognition performance for Chinese documents or specific formats (such as tables, certificates) is usually superior to general open-source models.

Recommended OCR Plugins (Dify Marketplace)


You can directly access the following link to search for all OCR tools in the marketplace:


Click here to search for “OCR” plugins in the Dify Plugin Marketplace

Commonly available OCR plugins include (but are not limited to):

- Aliyun OCR (阿里云 OCR)

  - Suitable for general text recognition, table recognition, etc.; commonly used by users in China.

- Tencent Cloud OCR (腾讯云 OCR)

  - Tencent Cloud’s OCR service, also well suited to Chinese-language documents.

- Volcengine OCR (火山引擎 OCR)

  - ByteDance’s OCR service.

- General OCR

  - General-purpose OCR tools contributed by community members.

Usage Recommendations


- Configure Credentials: When using the above cloud vendor plugins, you usually need to configure the corresponding `API Key` and `Secret Key` in Dify’s “Tools > Authorization”.

- Workflow Orchestration:

  - Remove the original “Document Extractor” node.

  - Add any of the above OCR Tool nodes.

  - Input: Select the file uploaded by the user.

  - Output: Pass the `text` or `json` result output by the OCR node to subsequent LLM nodes.

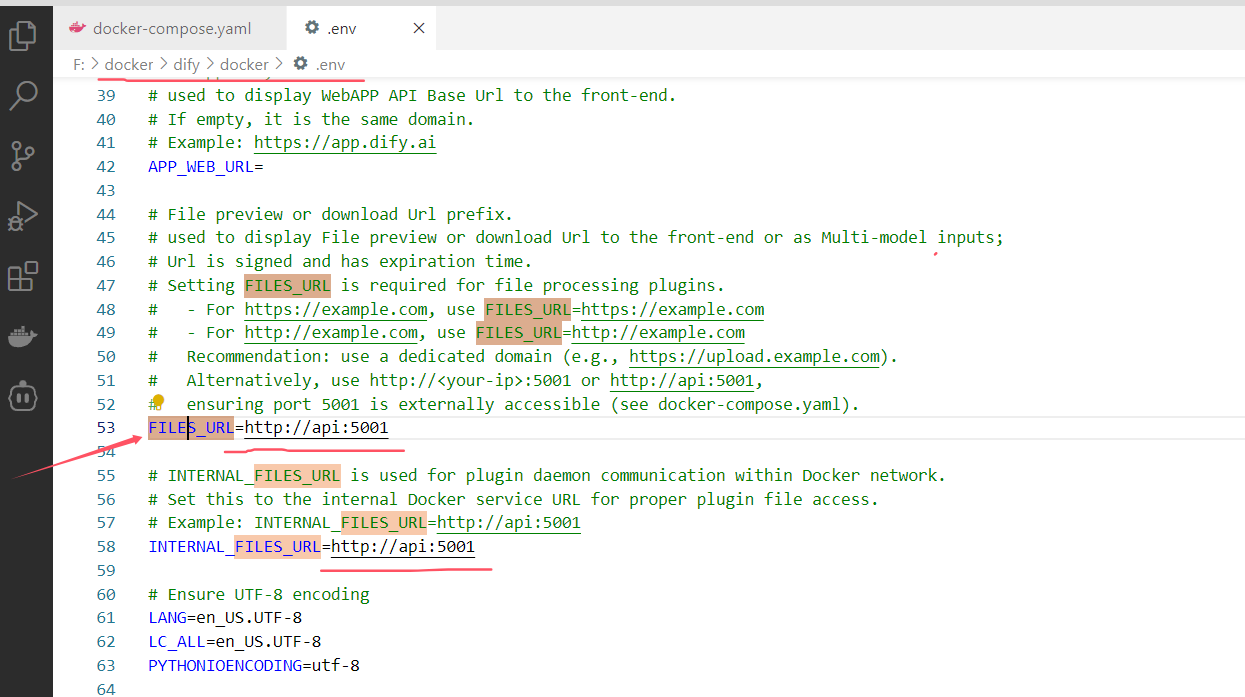

Many thanks for the replies from all the experts above, I’m thrilled. I have now installed the unstructured plugin locally and it’s also running in a container, but I’m having a directory issue. Specifically, the FILES_URL configuration seems problematic no matter how I set it. Below is the configuration in .env. I also tried configuring it with localhost, but it doesn’t seem right either.

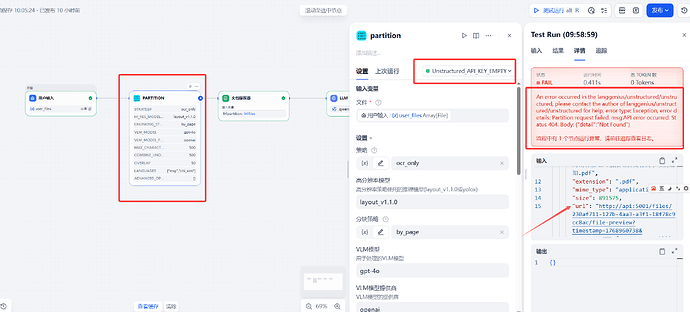

I have made modifications to my current workflow, as shown in the figure below:

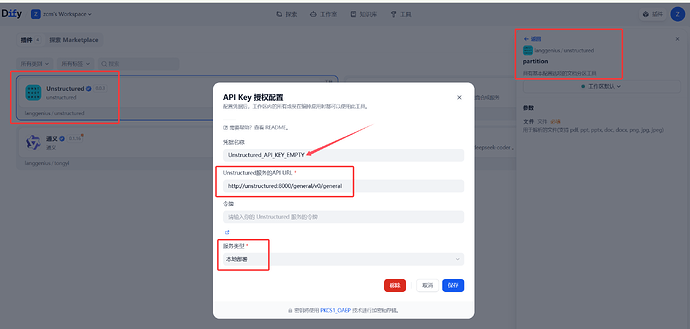

The unstructured plugin configuration is as follows:

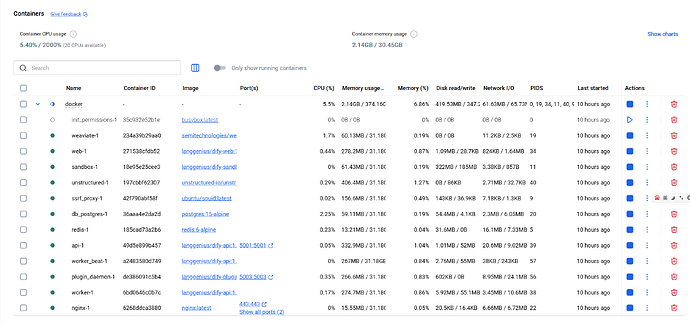

The current status of the Docker container is as shown in the figure below:

I’m not sure how to handle it from here.

Hi, have you tried http://unstructured:8000 rather than http://unstructured:8000/general/v0/general?

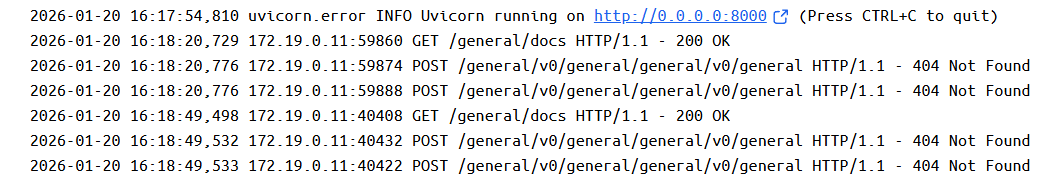

Your hint was a revelation! Based on your tip, I entered the unstructured container and saw the error. It turned out that it concatenated the request address, and the log is as follows. No wonder a 404 occurred:

So I changed the plugin address configuration, removed the latter part, and then the error became like this:

An error occurred in the langgenius/unstructured/unstructured, please contact the author of langgenius/unstructured/unstructured for help, error type: Exception, error details: Partition request failed. msg:{"detail":[{"type":"literal_error","loc":["body","chunking_strategy"],"msg":"Input should be 'by_title'","input":"by_page","ctx":{"expected":"'by_title'"}}]}

1 node in the process ran abnormally. Please go to the trace to view the log.

From the above error, it seems my parameter configuration has issues. At least the file was detected. Thanks, I’ll try adjusting it again.
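For anyone comparing their own configuration against the 404 described above, here is a minimal sketch of the URL handling involved. It assumes the plugin appends `/general/v0/general` to whatever address is configured, which is inferred from the two addresses mentioned in this thread rather than from the plugin's source; the function names are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the path the plugin adds on its own to the configured address.
PARTITION_PATH = "/general/v0/general"


def normalize_base_url(configured: str) -> str:
    """Reduce a configured address to the service root the plugin expects."""
    parts = urlsplit(configured.strip())
    path = parts.path.rstrip("/")
    if path.endswith(PARTITION_PATH):
        # The full endpoint was pasted in; drop it so it is not appended twice.
        path = path[: -len(PARTITION_PATH)]
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))


def request_url(configured: str) -> str:
    """Show the address that would actually be requested after normalization."""
    return normalize_base_url(configured) + PARTITION_PATH
```

With this normalization, both `http://unstructured:8000` and `http://unstructured:8000/general/v0/general` resolve to the same request address, whereas naive concatenation of the second form repeats the path and produces the 404.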

We are glad that your initial issue has been resolved.

It seems that ‘by_page’ is not supported by this version of the service; see Chunking Configuration - Unstructured 0.12.6 documentation.
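A quick pre-check like the following can catch this kind of parameter mismatch before the request reaches the service. It is only a sketch: the allowed set contains just `by_title` because that is the single value the error message in this thread reports as expected, and other server versions may accept more. The function name is hypothetical.

```python
# Assumption: taken from the error message in this thread, not from a full
# list of what every Unstructured version supports.
SUPPORTED_CHUNKING = {"by_title"}


def check_chunking_strategy(value):
    """Return a usable chunking_strategy, or None when chunking is disabled."""
    if value is None or value == "":
        return None  # leave the parameter out of the request entirely
    if value not in SUPPORTED_CHUNKING:
        allowed = ", ".join(sorted(SUPPORTED_CHUNKING))
        raise ValueError(
            f"chunking_strategy {value!r} is not accepted; use one of: {allowed}"
        )
    return value
```

Leaving the chunking strategy empty in the plugin configuration, or setting it to `by_title`, would pass this check; `by_page` would be rejected locally with a readable message.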

The unstructured partition node ran successfully and also successfully parsed the file content, as shown in the figure below:

But looking at this figure, ‘files’ is an empty list, and ‘text’ contains the recognized text. In that case, the text parser node will be completely unnecessary later, because unstructured has already achieved the function of text extraction. I have a question: why does the parsing result of unstructured contain ‘files’? Can other files be placed inside a PDF? That doesn’t seem possible, does it?!!

Thank you @Riino Additionally, @Dify_Forum_Helper can briefly answer this last question. Then, provide a comprehensive summary of the entire chat Q&A.

I’ll answer the last question directly first, and then briefly walk through the whole Q&A thread for you.

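1. Why does the Unstructured output contain files, but it is empty?

The structure you are seeing now is roughly:

- `text`

- `files`

- `json`

- `images`

- `elements`

- …

This is a typical “general-purpose parsing result schema”, designed to accommodate many kinds of input rather than PDFs specifically. Here are the parts relevant to your question, one at a time:

text

- You have already seen that it contains the complete OCR-recognized text.

- This is the main output meant to be used directly by the LLM. In your current scenario (“scanned PDF → extract text → let the LLM read it”), this field alone is basically enough.

- Your conclusion that “there is no need to add a document parser node after this” is correct: in this pipeline, Unstructured has already done the work of “Document Extractor + OCR”.

Why is files empty?

- This field is reserved for more complex input scenarios, for example:

  - The input is a zip or another “multi-file bundle”, and the tool attaches each extracted file under `files`;

  - Or an email with attachments, or standalone attachments embedded in certain Office documents;

  - It can also hold intermediate files produced by the tool.

- What you passed in is a single PDF, with no nested “standalone file objects” to export, so:

  - `files: []` is completely normal; it only means “this parse did not split out any additional sub-files”.

  - It has nothing to do with whether the text was recognized successfully.

In short:

`text` is the main channel for your current “give the document content to the LLM” use case;

`files` is an extension slot for “complex / bundled / attachment” scenarios. It is not needed this time, so it is empty.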

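2. Best practices for using Unstructured (based on your current results)

In your current workflow design, the following simplification is recommended:

- User uploads a file → feed it directly into the Unstructured node.

- In the LLM node’s Prompt, reference `{{ partition.text }}` (use the actual output name of your node) so that the model answers directly from this text.

- If you have finer-grained needs later, such as:

  - finer control by page, by title, or by paragraph;

  - “filter first, then feed to the LLM” logic;

  then use `elements` / `json` for secondary processing.

In this PDF OCR case, there is indeed no need to add another “Document Extractor” node; doing so would only reprocess text that has already been extracted, to no benefit.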
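As an illustration of the “filter first, then feed to the LLM” idea using `elements` / `json`, here is a rough sketch. It assumes the plugin’s element list mirrors the Unstructured API’s element dictionaries (a `type`, a `text`, and a `metadata` object with `page_number`); the exact shape of the plugin output has not been confirmed in this thread, and the function name and default type list are hypothetical.

```python
from collections import defaultdict


def text_by_page(elements, keep_types=("Title", "NarrativeText", "ListItem")):
    """Group the text of selected element types by page number.

    `elements` is assumed to be a list of dicts shaped like Unstructured
    elements. Elements without a page number are grouped under page 0.
    """
    pages = defaultdict(list)
    for element in elements:
        if element.get("type") not in keep_types:
            continue  # e.g. skip headers, footers, page numbers
        text = (element.get("text") or "").strip()
        if not text:
            continue
        page = (element.get("metadata") or {}).get("page_number", 0)
        pages[page].append(text)
    # One newline-joined string per page, in page order.
    return {page: "\n".join(chunks) for page, chunks in sorted(pages.items())}
```

The per-page strings can then be passed to the LLM selectively, for example only the pages that mention a keyword, instead of the full `text`.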

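3. Overall summary of this topic (so others can find it later)

The whole discussion went through three steps:

- Initial symptom

  - The user uploaded a scanned PDF plus a question, but the LLM said “no uploaded document received”.

  - Cause: the built-in “Document Extractor” node only reads the text layer and does not perform OCR → the scanned PDF has no selectable text → `text` is empty → the Prompt passes an empty string to the LLM.

- Diagnosis and approach

  - The discussion confirmed:

    - Configuring environment variables (for example ETL_TYPE=Unstructured) mainly affects knowledge base imports; it does not make the Document Extractor in a workflow automatically use Unstructured for OCR.

  - So the approach switched to the “plugin tool” route:

    - Use the Unstructured plugin or another OCR plugin, and explicitly add a node in the workflow to perform OCR + text parsing.

    - Pass the plugin’s output text to the LLM instead of relying on the built-in Document Extractor.

- Integrating and debugging Unstructured

  - First, the service URL was misconfigured (the full path was written manually and the plugin appended the path again internally, causing a 404); after changing it to the root address only, requests reached the service.

  - Next, the chunking strategy parameter (such as `chunking_strategy`) was adjusted according to the error message.

  - Finally, the partition node ran successfully and `text` contained the full OCR text; `files` being empty is by design and does not affect usage.

The whole pipeline now works:

Scanned PDF → Unstructured plugin OCR + parsing → outputs `text` → the LLM can see the document content and answer.

If you later run into specific structural questions while using elements / json for finer-grained control, you can paste the JSON of a single element here, and we can work out together how best to feed it to the LLM or store it.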

@Dify_Forum_Helper Actually, many people are asking the same configuration questions, so please provide a detailed review of the complete configuration process, from error to correction. This way, people who see the post later can troubleshoot issues by comparing their environment configurations one by one.